Sunday thoughts: When it comes to AI in education, are the facts of life traditional?

This week the DfE issued a consultation on AI in education: the opportunities and the risks it presents. This comes hot on the heels of the PM saying that education is one of the fields he is most excited about when it comes to AI.

(As an aside, I have literally never felt so old as during a discussion with some government officials about this the other week. They were musing about when the government had last got involved in the large-scale roll-out of technology platforms or materials, Covid laptops notwithstanding. The National Grid for Learning, I said! Blank faces. You know, the commitment to connect all schools to the internet, made in the 1997 manifesto and paid for by an effective levy on BT. The internet! Blank faces. I mean, we hooked up 20,000 institutions through a government initiative, using a whole new agency. Becta! Did… you just think it happened automatically? Yes, their faces conveyed. Then I realised most of them were barely born in 1997, and the idea of schools without the internet seemed absurd to them. Still, it's also nice to revisit the 1997 commitment that those without access to hardware or software would have it provided by the state, along with training for all teachers so they could master the technology. Wonder how that all turned out.)

Anyway, we at Public First have just finished some large-scale polling on what people in the UK and the US think about AI in education. With the caveat that the fieldwork was done a couple of months ago, and that the speed at which the debate is moving means some of these numbers may have shifted, it's remarkable (and yet perhaps not remarkable) how traditionalist people are about AI.

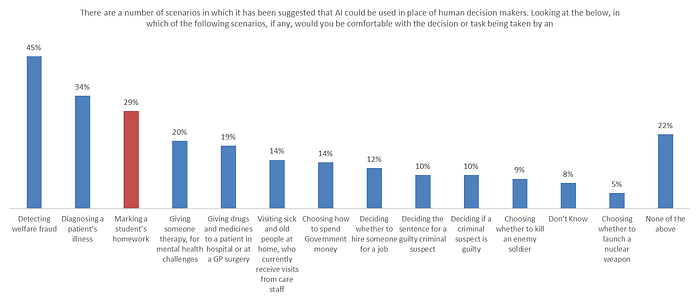

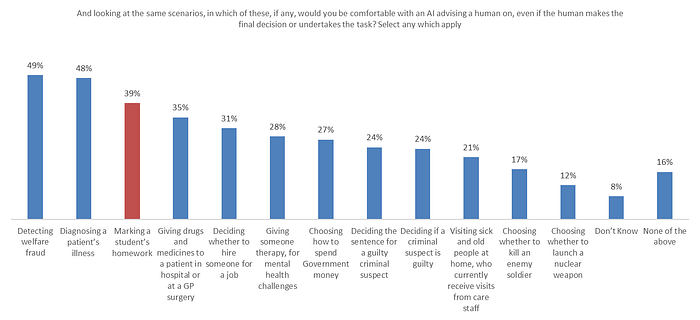

First finding: when you give people a list of things AI could do instead of, or alongside, humans, the British public is relatively comfortable with its use in education compared to some other areas:

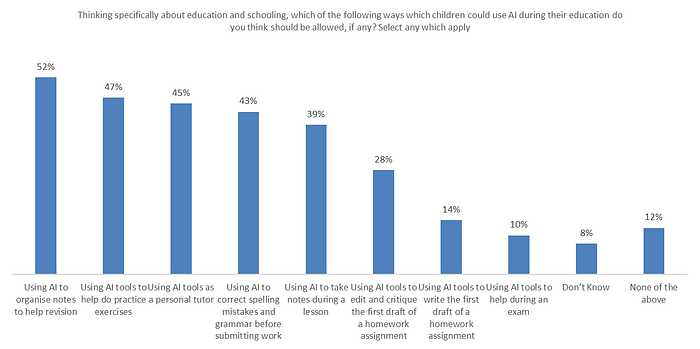

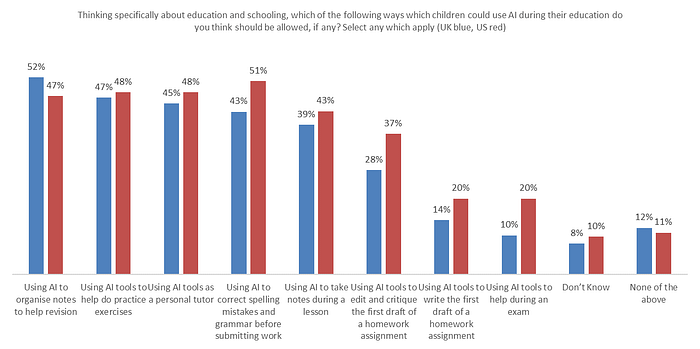

But second finding: when you dive into the more specific ways AI might be used in education, it's remarkable how quickly caution and scepticism kick in. Of the eight prompts we gave people, only one (using AI to help organise revision notes) got majority support.

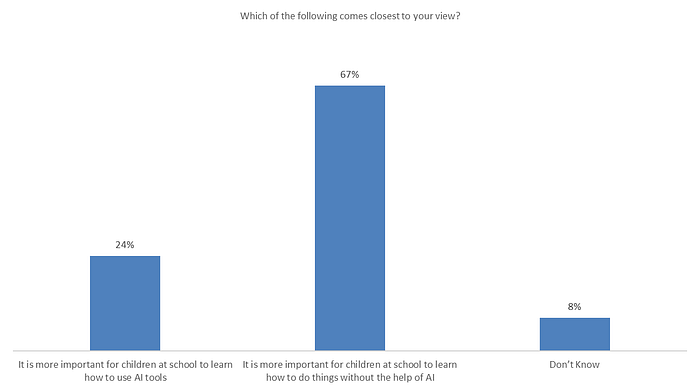

People debate whether children need to learn to use AI, or to learn how to do things without AI. Of course, both are important. But to get beyond that unhelpful answer, we used a forced choice. And when asked specifically whether it is more important for children to learn how to use AI, or to learn to do things without it (in other words, to master the underlying skill), the poll finds a very strong majority for the latter.

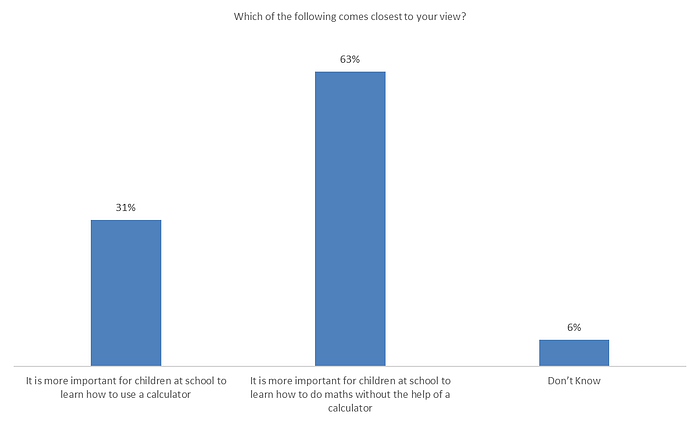

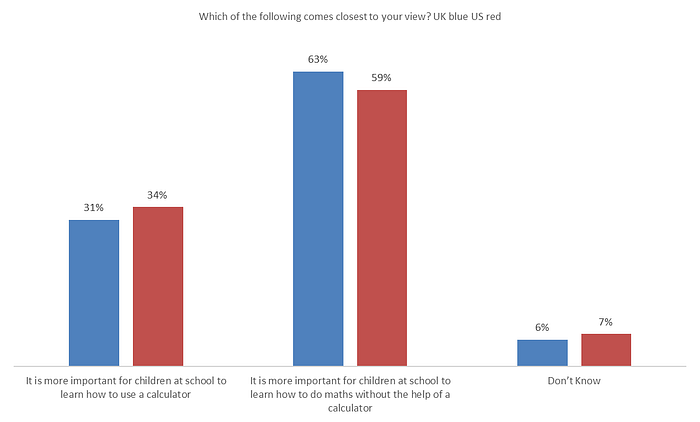

To check whether this is recency bias, we asked about the use of calculators, a technology so old that I find myself in the position of my young officials: I can't imagine schooling without it.

When asked about the use of the internet in schools, though, opinion switches, with a majority thinking it is more important to use this technology to help schooling than to learn how to do without it.

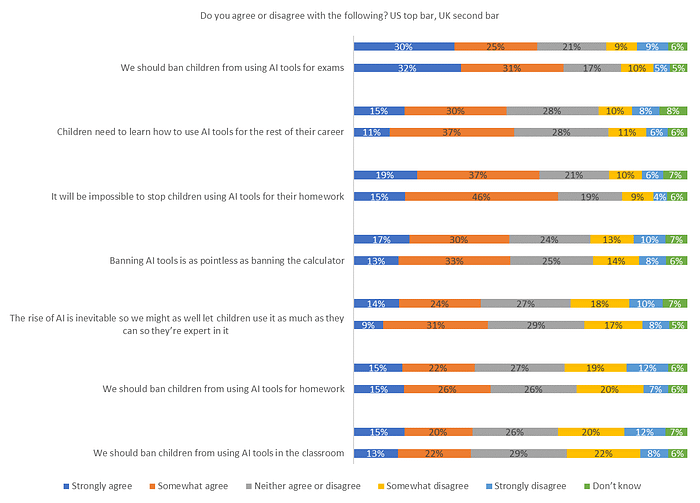

Next finding: when you ask people whether various uses of AI or technology should be banned or accepted, the public splits. There is a clear majority (63%) behind banning AI in exams, but a real reluctance to ban it beyond that, perhaps because people recognise it would be impossible to enforce a ban on AI for education outside the classroom (61%), or because young people will need it in their futures.

Turning next to the US, it's interesting that there's a general sense of greater positivity. Some of these findings are close to the margin of error (±2% with this sample), but generally speaking the US is more in favour of AI in most facets of schooling, most notably being twice as likely to say AI can be used in exams.

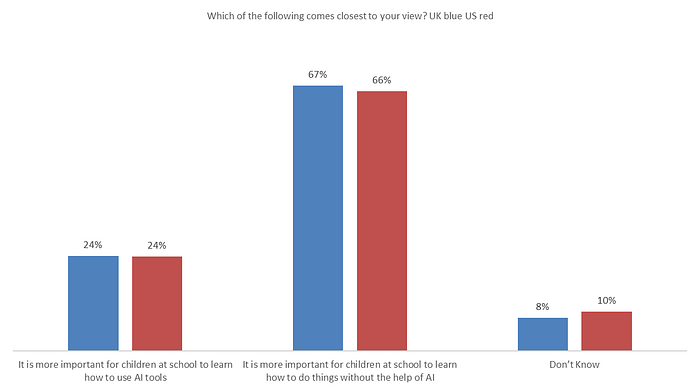

But they express almost identical sentiments when it comes to mastering technology versus mastering the underlying concepts.

And when comparing views on what specifically we should do with AI in education, the US public again more or less tracks the UK, actually being slightly less fatalistic about whether we could stop AI in homework and (as above) keener on AI in exams.

There’s a whole load more detail in the poll, including the cheery finding that 1 in 3 people in the UK (32%) and almost 4 in 10 people in the US (37%) think that there’s at least a 1% chance that humanity will be wiped out by AI in the next century (and of the people saying it’s less likely than that, the most popular reason given is “because we’ll be wiped out by something else first like climate change or nuclear war”).

Aside from some fun findings, I think the poll illustrates an important point. The debate on AI in education, like so many debates about technology, is often led by evangelists (teachers and non-teachers alike). It's important to recognise that most people, including parents, are a lot more cautious, and want children to master underlying concepts rather than have a computer simply spit out the answer for them.

More broadly, one of the reasons I'm fascinated by this topic is that it isn't remotely clear to me what government should do. The phrase du jour is "guardrails", which seems sensible to me. But most of that applies at the developer end. What should DfE want specifically in schools? It's clearly not going to develop products itself. It's almost certainly not going to reintroduce Becta or something like it to oversee AI roll-out. The general consensus has long been that some schools are immature buyers of ed tech, and I'm sure that's true (though larger MATs are redressing the balance). So there's a huge risk of snake oil among the AI products coming down the line, but what in practice can DfE do about that?

I don't have an easy answer, and I'm interested to see what the consultation finds. Aside from guardrails around AI ed tech products, the two most obvious things, it seems to me, are that DfE should think carefully about the use of pupil data in AI (which is both a wonderful opportunity for AI products to learn from massive real-time datasets on student learning, and a huge personal privacy risk), and that it should carefully evaluate the claims made by AI ed tech products, perhaps with some sort of souped-up role for the EEF.

But ultimately, in the absence of any real information in this fast-moving space, the logical policy response is to maintain a watching brief, be ready to respond where necessary, and not over-correct or try to shape it, especially when, at heart, the British public wants to see a level of caution.